5. Conditional Predictive Ordinate

From lecture, we learned that the CPO can be defined as:

\[\begin{equation*}

\text{CPO}_{i} = f(y_{i}\vert y_{-i})

=\int_{\Theta}f(y_{i}\vert \theta)\pi(\theta \vert y_{-i})d\theta

\end{equation*}\]

where \(y_{-i}\) denotes all of the data except the \(i\)-th observation. Here, we will derive the formula for CPO (somewhat rigorously) and sketch how it can be estimated from a sufficiently large number of posterior samples (less rigorously).

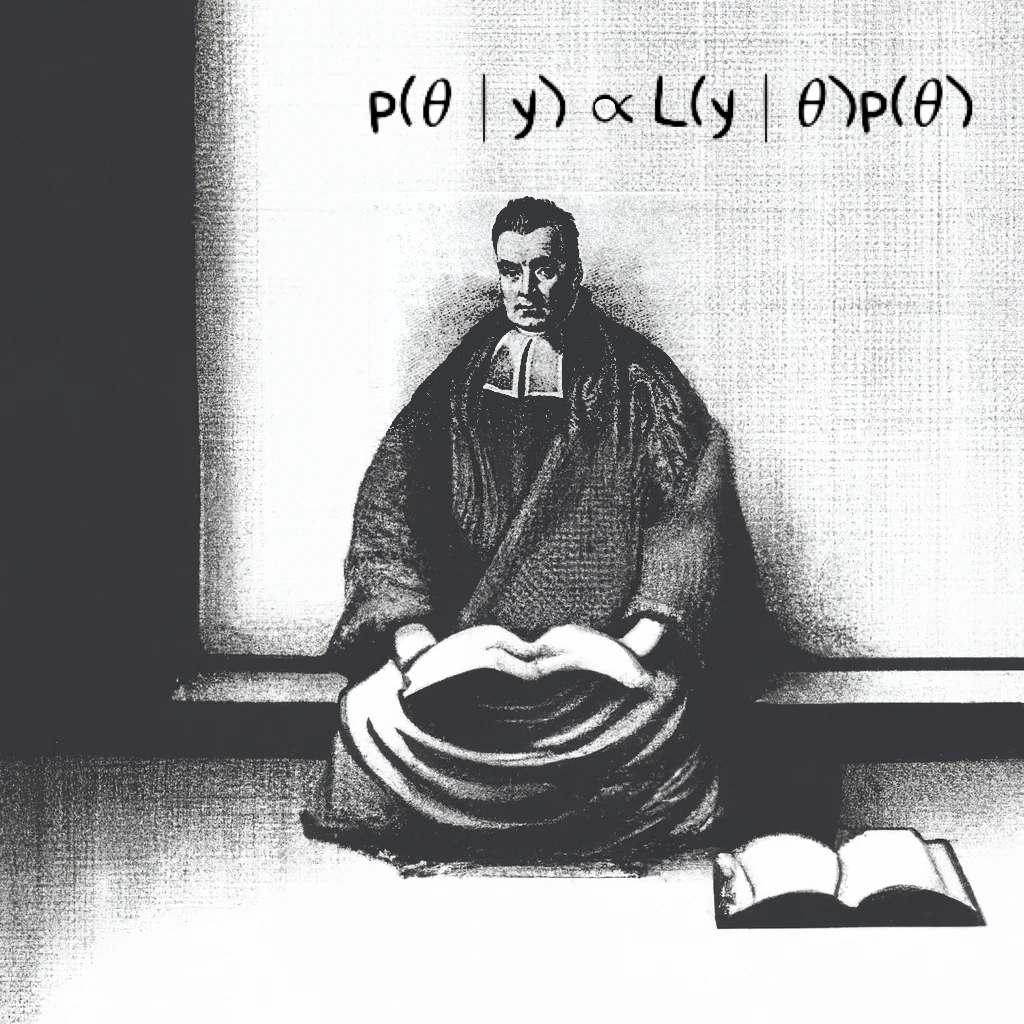

Starting with Bayes’ Theorem, and noting that when the observations are conditionally independent given \(\theta\), the likelihood term \(p(y\vert \theta)\) factorizes into \(p(y_{i}\vert \theta)\cdot p(y_{-i}\vert \theta)\):

\[\begin{equation*}

p(\theta \vert y) = \frac{p(y\vert \theta) p(\theta)}{p(y)}

= \frac{p(y_{i}\vert \theta)p(y_{-i}\vert \theta)p(\theta)}{p(y)}

\end{equation*}\]

Solving for \(p(y_{-i}\vert \theta)\) gives:

\[\begin{equation*}

p(y_{-i}\vert \theta) = \frac{p(y)p(\theta \vert y)}{p(y_{i}\vert \theta)p(\theta)}

\end{equation*}\]

and, applying Bayes’ Theorem to \(y_{-i}\) alone:

\[\begin{equation*}

p(\theta \vert y_{-i}) = \frac{p(y_{-i}\vert \theta)p(\theta)}{p(y_{-i})}

\end{equation*}\]

The ratio \(\frac{p(\theta \vert y_{-i})}{p(\theta \vert y)}\) is helpful here:

\[\begin{align*}

\frac{p(\theta \vert y_{-i})}{p(\theta \vert y)} &=

\frac{\frac{p(y_{-i}\vert \theta)\cancel{p(\theta)}}{p(y_{-i})}}{\frac{p(y\vert \theta)\cancel{p(\theta)}}{p(y)}}

=\frac{p(y_{-i}\vert \theta)p(y)}{p(y\vert \theta)p(y_{-i})}\\

&=\frac{p(y)}{p(y_{-i})}\cdot\frac{\cancel{p(y_{-i}\vert \theta)}}{p(y_{i}\vert \theta)\cancel{p(y_{-i}\vert \theta)}}\\

\frac{p(\theta \vert y_{-i})}{p(\theta \vert y)}

&= \frac{p(y)}{p(y_{-i})}\cdot\frac{1}{p(y_{i}\vert \theta)}\\

\frac{p(\theta \vert y_{-i})}{\cancel{p(\theta \vert y)}} \cancel{\textcolor{blue}{p(\theta\vert y)}}

&= \frac{p(y)}{p(y_{-i})}\cdot\frac{1}{p(y_{i}\vert \theta)}\textcolor{blue}{p(\theta\vert y)}\\

p(\theta \vert y_{-i}) &= \frac{p(y)}{p(y_{-i})}\cdot\frac{1}{p(y_{i}\vert \theta)}p(\theta\vert y)\\

%first integral line

\int_{\Theta}p(\theta \vert y_{-i})d\theta &= \frac{p(y)}{p(y_{-i})}\cdot \int_{\Theta}\frac{1}{p(y_{i}\vert \theta)}p(\theta\vert y)d\theta

\end{align*}\]

Since the left-hand side above is a probability density integrated over the entire parameter space, it equals 1:

\[\begin{align*}

1 &= \frac{p(y)}{p(y_{-i})}\cdot \int_{\Theta}\frac{1}{p(y_{i}\vert \theta)}p(\theta\vert y)d\theta\\

\end{align*}\]

\[\begin{equation}

\frac{p(y_{-i})}{p(y)} = \int_{\Theta}\bigg[ \frac{1}{p(y_{i}\vert \theta)} \bigg]\cdot p(\theta \vert y)d\theta \tag{1}

\end{equation}\]

The right-hand side of equation (1) should remind you of LOTUS (Law of The Unconscious Statistician): it’s just an expectation. Recall that for a continuous random variable \(X\) with density function \(p(x)\), if \(Y=g(X)\) then:

\[\begin{equation*}

\mathbb{E}[Y]=\mathbb{E}[g(X)]=\int_{-\infty}^{\infty}g(x)\cdot p(x)dx

\end{equation*}\]
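As a quick illustration of why LOTUS matters for sampling, here is a minimal sketch (not part of the lecture material) that approximates \(\mathbb{E}[g(X)]\) by averaging \(g\) over draws of \(X\). The choices \(X\sim N(0,1)\) and \(g(x)=x^{2}\), the seed, and the sample size are all assumptions made for the example; the exact answer in this case is \(\mathbb{E}[X^{2}]=1\).

```python
import numpy as np

# Illustrative assumptions: X ~ N(0, 1) and g(x) = x**2, so E[g(X)] = Var(X) = 1.
rng = np.random.default_rng(0)
x = rng.normal(loc=0.0, scale=1.0, size=200_000)


def g(x):
    """Transformation whose expectation we want."""
    return x ** 2


# LOTUS: E[g(X)] is the integral of g(x) p(x) dx. With draws from p(x),
# the sample mean of g(x) approximates that integral.
lotus_estimate = g(x).mean()
print(lotus_estimate)
```

The same idea is what lets an expectation over a posterior be approximated by an average over posterior samples.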

To clarify that the density function for the expectation is a posterior, we use the following notation:

\[\begin{equation*}

\frac{p(y_{-i})}{p(y)} = \mathbb{E}_{\theta \sim p(\theta \vert y)}\bigg[ \frac{1}{p(y_{i}\vert \theta)} \bigg]

\end{equation*}\]

In other words, the right-hand side of (1) represents the expectation of \(\frac{1}{p(y_{i}\vert \theta)}\) over \(\theta\) when \(\theta\) is distributed according to \(p(\theta \vert y)\). Taking the reciprocal of both sides gives:

\[\begin{equation}

\frac{p(y)}{p(y_{-i})} =

\bigg( \mathbb{E}_{\theta \sim p(\theta \vert y)}\bigg[ \frac{1}{p(y_{i}\vert \theta)} \bigg] \bigg)^{-1} \tag{2}

\end{equation}\]

Let’s reframe \(p(y)\). It’s the marginal distribution of all the data, but we could just as well think of it as the joint distribution of \(y_{i}\) and \(y_{-i}\): they’re one and the same. This allows us to apply the definition of conditional probability:

\[\begin{equation*}

p(y) = p(y_{i} , y_{-i}) = \textcolor{blue}{p(y_{i}\vert y_{-i})}p(y_{-i})

\end{equation*}\]

Notice that the blue factor is the \(\text{CPO}_{i}\) defined at the beginning. Substituting this expression for \(p(y)\) into the left-hand side of (2) gives:

\[\begin{align*}

\frac{p(y_{i}\vert y_{-i})\cancel{p(y_{-i})}}{\cancel{p(y_{-i})}} &=

\bigg( \mathbb{E}_{\theta \sim p(\theta \vert y)}\bigg[ \frac{1}{p(y_{i}\vert \theta)} \bigg] \bigg)^{-1}\\

p(y_{i}\vert y_{-i})&=

\bigg( \mathbb{E}_{\theta \sim p(\theta \vert y)}\bigg[ \frac{1}{p(y_{i}\vert \theta)} \bigg] \bigg)^{-1}\\

&=\text{CPO}_{i}\blacksquare

\end{align*}\]

We can estimate this by Monte Carlo, replacing the posterior expectation with an average over posterior samples:

\[\begin{equation*}

\hat{\text{CPO}}_{i}=

\bigg(

\frac{1}{B}\sum_{b=1}^{B}\frac{1}{f(y_{i}\vert \theta^{(b)})}

\bigg)^{-1}

\end{equation*}\]

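where \(\theta^{(b)}\) is the \(b\)-th of \(B\) posterior samples drawn from \(p(\theta \vert y)\), and \(f(y_{i}\vert \theta)\) is the same likelihood written as \(p(y_{i}\vert \theta)\) in the derivation above.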

1. For each posterior sample \(\theta^{(b)}\):

   a. Evaluate the likelihood at the same \(y_{i}\), using the posterior sample \(\theta^{(b)}\) as the parameter value.

   b. Take the reciprocal of the calculation above.

2. Take the mean of all values from step (1).

3. Take the reciprocal of the value from step (2). This is the final estimate of \(\text{CPO}_{i}\).
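The steps above can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not the course's reference implementation: the helper name `log_cpo`, the toy model (\(y_{i}\sim N(\theta, 1)\) with a \(N(0, 10^{2})\) prior on \(\theta\)), the seed, and the sample sizes are all hypothetical choices. The toy model is conjugate, so posterior samples can be drawn directly and the exact \(p(y_{i}\vert y_{-i})\) is available for comparison. The computation is done on the log scale with `scipy.special.logsumexp`, because averaging reciprocals of very small likelihood values can be numerically unstable.

```python
import numpy as np
from scipy.special import logsumexp
from scipy.stats import norm


def log_cpo(log_lik):
    """Estimate log(CPO_i) from a (B, n) array of log f(y_i | theta^(b)).

    Hypothetical helper: rows index posterior samples, columns index observations.
    """
    log_lik = np.asarray(log_lik, dtype=float)
    B = log_lik.shape[0]
    # Steps 1-2: log of the mean reciprocal likelihood across posterior samples.
    log_mean_recip = logsumexp(-log_lik, axis=0) - np.log(B)
    # Step 3: taking the reciprocal is a sign flip on the log scale.
    return -log_mean_recip


# Toy data (assumed model: y_i ~ N(theta, sigma^2), theta ~ N(0, prior_sd^2)).
rng = np.random.default_rng(0)
n, sigma, prior_sd = 20, 1.0, 10.0
y = rng.normal(1.0, sigma, size=n)

# Conjugate posterior for theta given all the data.
post_prec = 1.0 / prior_sd**2 + n / sigma**2
post_mean = (y.sum() / sigma**2) / post_prec
theta = rng.normal(post_mean, np.sqrt(1.0 / post_prec), size=20_000)

# log f(y_i | theta^(b)) for every sample b and observation i: shape (B, n).
log_lik = norm.logpdf(y[None, :], loc=theta[:, None], scale=sigma)
cpo_hat = np.exp(log_cpo(log_lik))

# Exact leave-one-out predictive density p(y_i | y_{-i}) for comparison.
loo_prec = 1.0 / prior_sd**2 + (n - 1) / sigma**2
loo_mean = ((y.sum() - y) / sigma**2) / loo_prec
cpo_exact = norm.pdf(y, loc=loo_mean, scale=np.sqrt(1.0 / loo_prec + sigma**2))

print(np.column_stack([cpo_hat, cpo_exact]))
```

In a real analysis the `theta` array would come from an MCMC sampler rather than a closed-form posterior, and the `log_lik` matrix would be built from whatever likelihood the model specifies. The estimate can be noisy for observations that the model fits poorly, since a few samples with very small likelihood dominate the average.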