1. Missing Data

Taxonomy of Missing Data

Missing completely at random (MCAR)

Missingness does not depend on observed or unobserved data.

Missing at random (MAR)

Missingness depends only on observed data.

Missing not at random (MNAR)

Neither MCAR nor MAR holds. Missingness may depend on the missing values themselves, for example on their magnitude.

MCAR and MAR are considered ignorable missingness, while MNAR is considered non-ignorable.
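The three mechanisms can be made concrete with a small simulation. This is only a sketch under assumed settings: the data-generating model, the 30% missingness rate, and the logistic missingness functions are all hypothetical choices made for illustration, not part of the course models.

```python
import math
import random

random.seed(0)

def logistic(z):
    return 1.0 / (1.0 + math.exp(-z))

# Hypothetical data: x is always observed, y may go missing.
n = 10_000
x = [random.gauss(0.0, 1.0) for _ in range(n)]
y = [2.0 * xi + random.gauss(0.0, 1.0) for xi in x]

# MCAR: every y has the same 30% chance of being missing.
mcar = [random.random() < 0.3 for _ in range(n)]

# MAR: the chance that y is missing depends only on the observed x.
mar = [random.random() < logistic(xi) for xi in x]

# MNAR: the chance that y is missing depends on y itself (large values vanish).
mnar = [random.random() < logistic(yi) for yi in y]

def observed_mean(values, missing):
    """Mean of the values that were not flagged as missing."""
    kept = [v for v, m in zip(values, missing) if not m]
    return sum(kept) / len(kept)

full_mean = sum(y) / n
for name, mask in [("MCAR", mcar), ("MAR", mar), ("MNAR", mnar)]:
    print(f"{name}: mean of observed y = {observed_mean(y, mask):.3f} "
          f"(full-data mean = {full_mean:.3f})")
```

Under MCAR the mean of the observed y stays close to the full-data mean. Under MAR and MNAR it shifts downward, but only in the MAR case can the shift be explained, and corrected, using the observed x.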

Multiple imputations

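Single imputation of missing values is not preferred because it treats the imputed values as if they had been observed, so it does not estimate the error correctly or account for the uncertainty in the imputed data. Multiple imputation, a method developed by Rubin, instead fills in the missing values several times and combines the results of the repeated complete-data analyses.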

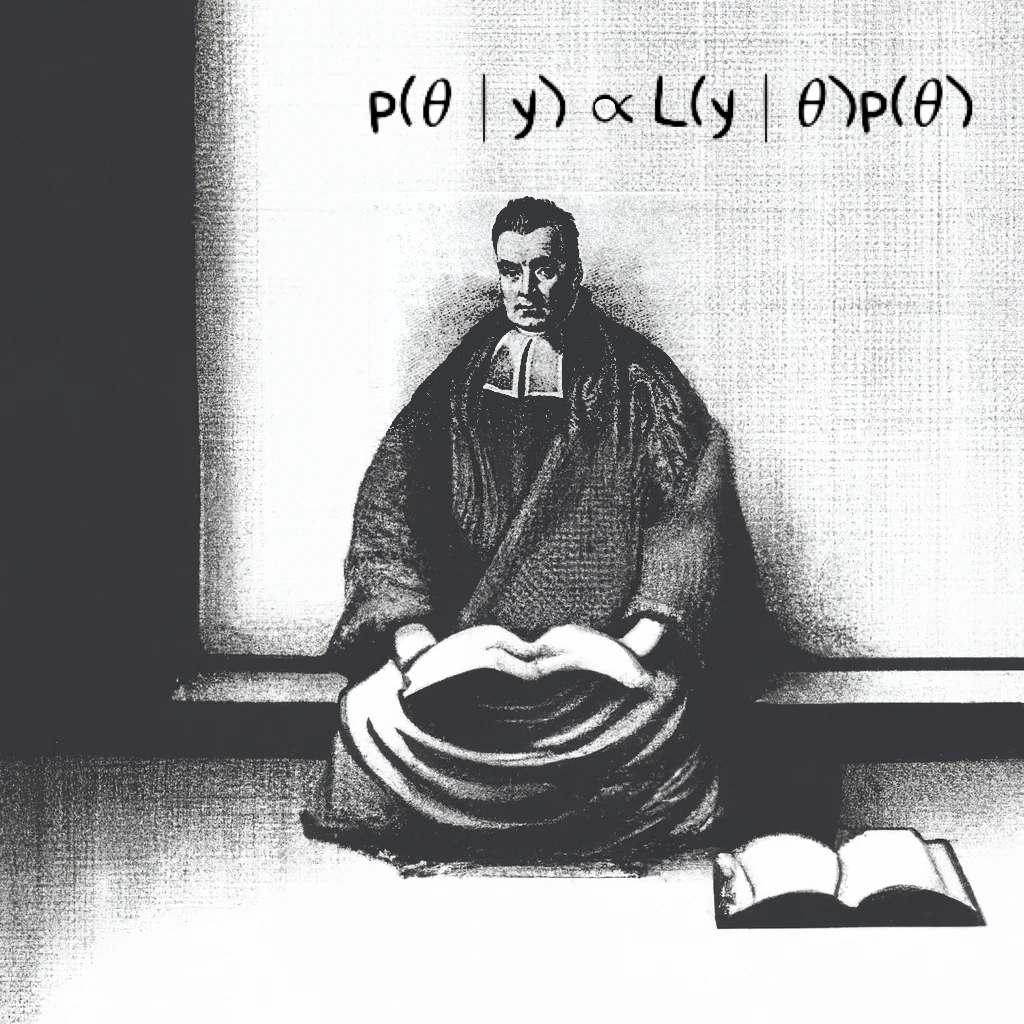

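Write \(Y_{obs}\) for the observed data and \(Y_{mis}\) for the missing data. The actual posterior distribution of \(\theta\) is:

\[
p(\theta \mid Y_{obs}) = \int p(\theta \mid Y_{obs}, Y_{mis}) \, p(Y_{mis} \mid Y_{obs}) \, dY_{mis}
\]

i.e. (actual posterior distribution of \(\theta\)) = AVE(complete-data posterior distribution of \(\theta\)), where the average is taken over the distribution of the missing data given the observed data.

Posterior mean of \(\theta\):

\[
E(\theta \mid Y_{obs}) = E\left[ E(\theta \mid Y_{obs}, Y_{mis}) \mid Y_{obs} \right]
\]

i.e. (posterior mean of \(\theta\)) = AVE(repeated complete-data posterior means of \(\theta\))

Posterior variance of \(\theta\):

\[
\mathrm{Var}(\theta \mid Y_{obs}) = E\left[ \mathrm{Var}(\theta \mid Y_{obs}, Y_{mis}) \mid Y_{obs} \right] + \mathrm{Var}\left[ E(\theta \mid Y_{obs}, Y_{mis}) \mid Y_{obs} \right]
\]

i.e. (posterior variance of \(\theta\)) = AVE(repeated complete-data variances of \(\theta\)) + Var(repeated complete-data posterior means of \(\theta\))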
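The averaging identities above translate directly into a pooling step over M imputed datasets. The sketch below assumes we already have one posterior mean and one posterior variance of \(\theta\) per imputed dataset; the function name `pool` and the five sets of numbers are hypothetical. It follows the identities as stated here, whereas Rubin's finite-M combining rules additionally inflate the between-imputation term by a factor of (1 + 1/M).

```python
from statistics import mean, variance

def pool(post_means, post_vars):
    """Combine complete-data posterior summaries across imputations."""
    pooled_mean = mean(post_means)   # AVE of complete-data posterior means
    within = mean(post_vars)         # AVE of complete-data posterior variances
    between = variance(post_means)   # sample variance of the posterior means
    return pooled_mean, within + between

# Hypothetical summaries from M = 5 imputed datasets.
post_means = [1.9, 2.1, 2.0, 2.2, 1.8]
post_vars = [0.25, 0.30, 0.28, 0.27, 0.30]

pooled_mean, total_var = pool(post_means, post_vars)
print(f"pooled mean = {pooled_mean:.3f}, total variance = {total_var:.3f}")
```

The total variance is larger than any average of the within-imputation variances alone, which is exactly the uncertainty that a single imputation would leave out.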

PyMC Implementation

Luckily, PyMC automatically takes care of imputation for missing observed (or ‘y’) values when they are encoded as NaN. PyMC provides posterior samples for each missing value, from which we can calculate summaries such as the mean, variance, and HDI.
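As an illustration of the summaries mentioned above, the following sketch computes the mean, variance, and HDI from a set of draws for one missing value. The draws here are simulated stand-ins, not output from PyMC; in practice they would be taken from the sampled trace. The helper `hdi` and the 94% level are assumptions chosen for the example.

```python
import math
import random
from statistics import mean, variance

def hdi(samples, prob=0.94):
    """Return the narrowest interval that contains `prob` of the samples."""
    s = sorted(samples)
    n = len(s)
    k = math.ceil(prob * n)  # number of draws the interval must cover
    # Slide a window of k consecutive sorted draws and keep the narrowest one.
    start = min(range(n - k + 1), key=lambda i: s[i + k - 1] - s[i])
    return s[start], s[start + k - 1]

random.seed(1)
# Stand-in for the posterior draws of ONE missing value (not real model output).
draws = [random.gauss(20.0, 5.0) for _ in range(4000)]

lo, hi = hdi(draws)
print(f"mean = {mean(draws):.2f}, variance = {variance(draws):.2f}, "
      f"94% HDI = ({lo:.2f}, {hi:.2f})")
```

The same three summaries would be computed separately for every missing value, since each one gets its own set of draws.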

The next page has examples of ignorable missingness (Models 1 and 2), followed by Model 3 with non-ignorable missingness.

Model 1: Imputing observed (or y/response) values

Model 2: Imputing predictors (or x) values

Model 3: y is missing depending on size

To lay the groundwork, the underlying model is a linear regression with slope and intercept (\(\alpha\) and \(\beta\)) coefficients for each subject in the experiment.

In Model 2, we need to provide a prior distribution for the missing x values; here it is \(N(20, 5^2)\). In Model 3, the probability of missingness depends on size, specified by: